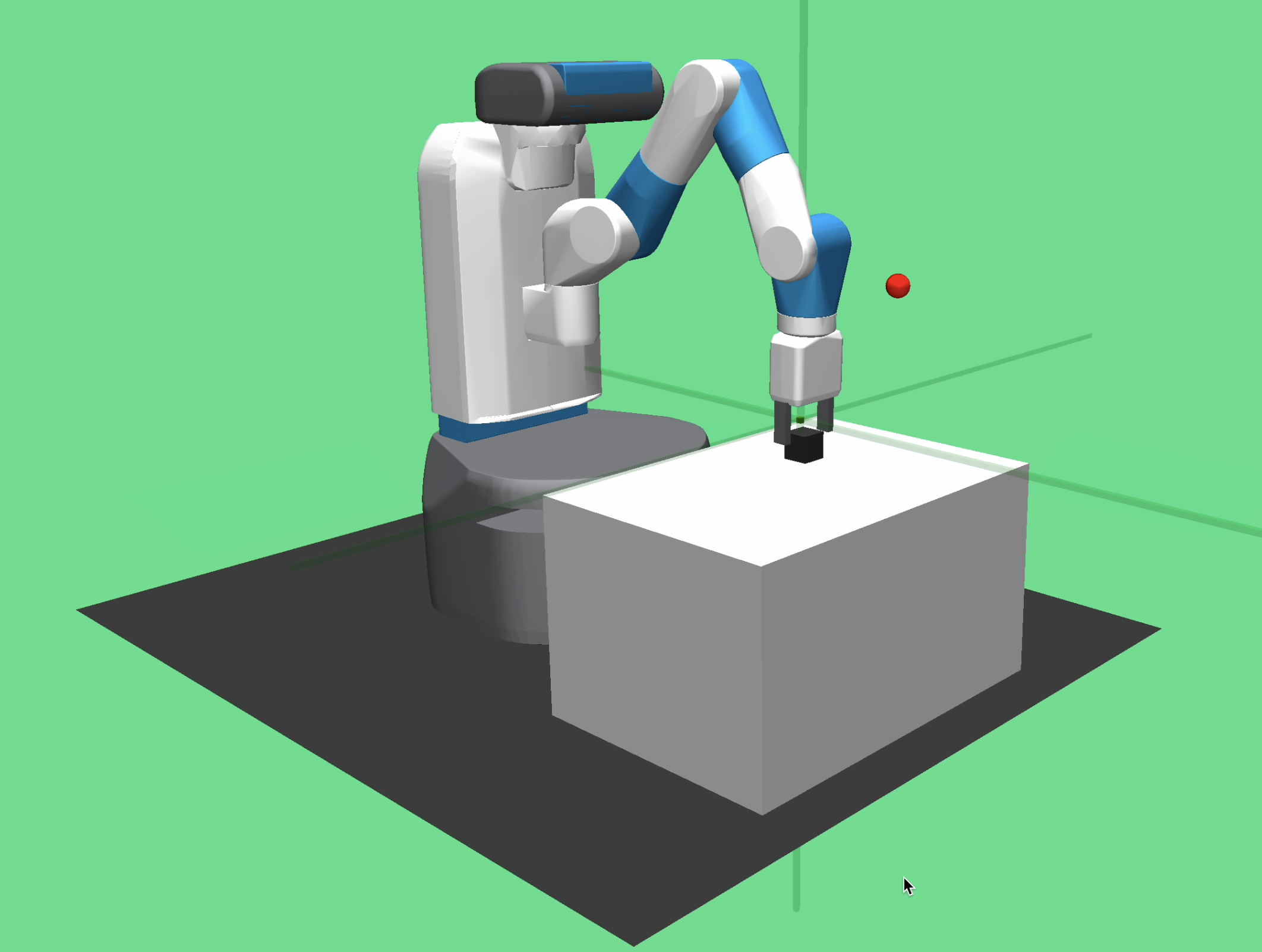

Lessons Learned from Implementing DDPG+HER for Robot Fetch and Place

Mar 7, 2026

I spent almost a whole week trying to implement the DDPG+HER algorithm for the robot fetch and place task, and didn't succeed until the last day. It was my first time implementing a reinforcement learning algorithm from a research paper, and although the paper has been out for almost 10 years, only a handful of implementations can be found on the internet. I will briefly introduce the algorithm and then share some of my takeaways.

DDPG and HER

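DDPG (Deep Deterministic Policy Gradient) can be understood as Deep DPG: it takes the actor-critic architecture of DPG and uses deep neural networks as the function approximators for both the actor and the critic. For HER (Hindsight Experience Replay), the core idea is to make sure the model can still learn from failed episodes when it trains on sampled transitions. It does this by replaying a transition with a goal that was actually achieved in place of the original goal, so a failure under the original goal becomes a success under the substituted one. (There are already plenty of introductions to the algorithm on the internet, so I just describe it the way I understand it.)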
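To make the relabeling idea concrete, here is a minimal sketch of HER using the simplest "final" strategy, where every transition in an episode is replayed with the goal that was achieved at the end of that episode. This is not the code from my implementation: the function names, the dictionary layout of a transition, and the 0.05 distance threshold are all assumptions chosen for illustration, and the reward is assumed to be sparse (0 on success, -1 otherwise).

```python
import numpy as np


def sparse_reward(achieved_goal, goal, threshold=0.05):
    """Return 0.0 if achieved_goal is within `threshold` of goal, else -1.0.

    The threshold value is an assumption made for this sketch.
    """
    distance = np.linalg.norm(np.asarray(achieved_goal) - np.asarray(goal))
    return 0.0 if distance <= threshold else -1.0


def her_relabel_final(episode, reward_fn=sparse_reward):
    """Return copies of the transitions, relabeled with the episode's final achieved goal.

    `episode` is a list of dicts with (hypothetical) keys:
    obs, action, next_obs, achieved_goal, goal, reward.
    The original transitions are left unchanged.
    """
    final_goal = episode[-1]["achieved_goal"]
    relabeled = []
    for transition in episode:
        new_transition = dict(transition)  # shallow copy, keeps the original intact
        new_transition["goal"] = final_goal
        new_transition["reward"] = reward_fn(transition["achieved_goal"], final_goal)
        relabeled.append(new_transition)
    return relabeled


# A toy failed episode: the gripper moves along x but never reaches the goal (1, 1).
desired_goal = np.array([1.0, 1.0])
positions = [np.array([0.1, 0.0]), np.array([0.2, 0.0]), np.array([0.3, 0.0])]
episode = []
previous = np.array([0.0, 0.0])
for position in positions:
    episode.append({
        "obs": previous,
        "action": position - previous,
        "next_obs": position,
        "achieved_goal": position,
        "goal": desired_goal,
        "reward": sparse_reward(position, desired_goal),
    })
    previous = position

relabeled = her_relabel_final(episode)
original_rewards = [t["reward"] for t in episode]
relabeled_rewards = [t["reward"] for t in relabeled]
print(original_rewards, relabeled_rewards)
```

In a replay buffer you would store both the original and the relabeled transitions, so the critic sees at least one non-failure reward per episode even when the original goal was never reached. The HER paper also describes other strategies for choosing the substitute goal, which this sketch does not cover.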

What I learned from this

1. Coding is becoming more trivial now, but you still need to understand what each line of code is doing and know how to amend it. Tools like Copilot and Codex already let you avoid almost all repetitive coding and generate runnable code with a low error rate from your prompts. However, they will still occasionally generate code that either raises errors or doesn't fully match your intention, and debugging it requires at least some understanding of the code. I think that's probably the main technical gap between programmers and non-programmers now.

2. Don't trust unverifiable advice from AI. During the parameter tuning process, AI tools like Codex gave me numerous suggestions for improving the success rate. Some were good because they were verifiable (e.g. you can reach the same conclusion from your own understanding or publicly available knowledge), but many others were either unverifiable or offered only marginal performance improvements, and they won't lead you in the right direction. I think the best way to avoid bad advice is to build a deep understanding of the details of the framework. If there are still unknowns beyond the scope of human knowledge among those details, follow your intuition[1].

3. Computing power might be the bottleneck for research. I had heard of the scaling laws for neural networks before, but this time I really witnessed how big a difference shorter training time makes. It certainly took me some time to understand the algorithm and the code, but after that, training time was the main obstacle to reaching satisfactory performance. If each training run cost negligible time, in theory you could just greatly increase the number of episodes and would almost certainly get a good result. In fact, that might be the main reason we are so careful about parameters and put so much effort into designing effective models.

Questions and future work

1. I still have no idea how to design a novel algorithm for reinforcement learning. Although I know almost every detail of the DDPG and HER algorithms, I feel like I learned nothing about the principles behind them per se. But the HER paper suggests to me that maybe the best way to design an algorithm is to think in a naive way. For example, the original goal of HER is to learn from failures, which is very intuitive.

2. Try more state-of-the-art algorithms and try to deploy them on a physical robot. I hope that by comparing different algorithms I can develop better taste for how an algorithm is designed and what a good algorithm looks like. I also want to run into more technical difficulties, and solve them, by deploying on a physical robot.

3. Review math notation. Specifically, read through the mathematical notation in the reinforcement learning book by Sutton and Barto. If I have any questions, refer to math textbooks such as linear algebra and probability theory books.

Results

I achieved a success rate of 90% after training for around 230 epochs, using the parameters mentioned in the paper (you can see the experiment details in the appendix). The other parameters that aren't mentioned in the paper are mostly from this GitHub repository.

[1] In coding and mathematics problems, the answer is almost always binary: true or false. But the more complicated the decision-making environment is, the harder it is to say whether a decision is absolutely correct. That's actually good news for human beings, because no matter how advanced AI is, it will never help us make decisions that are correct by all measures. If the AI we design is aligned with human will, then the irreplaceable part of human beings will surely include decision making in the real world. ↩

Last modified on Mar 8, 2026